What DeepSeek Means For Open-Source AI

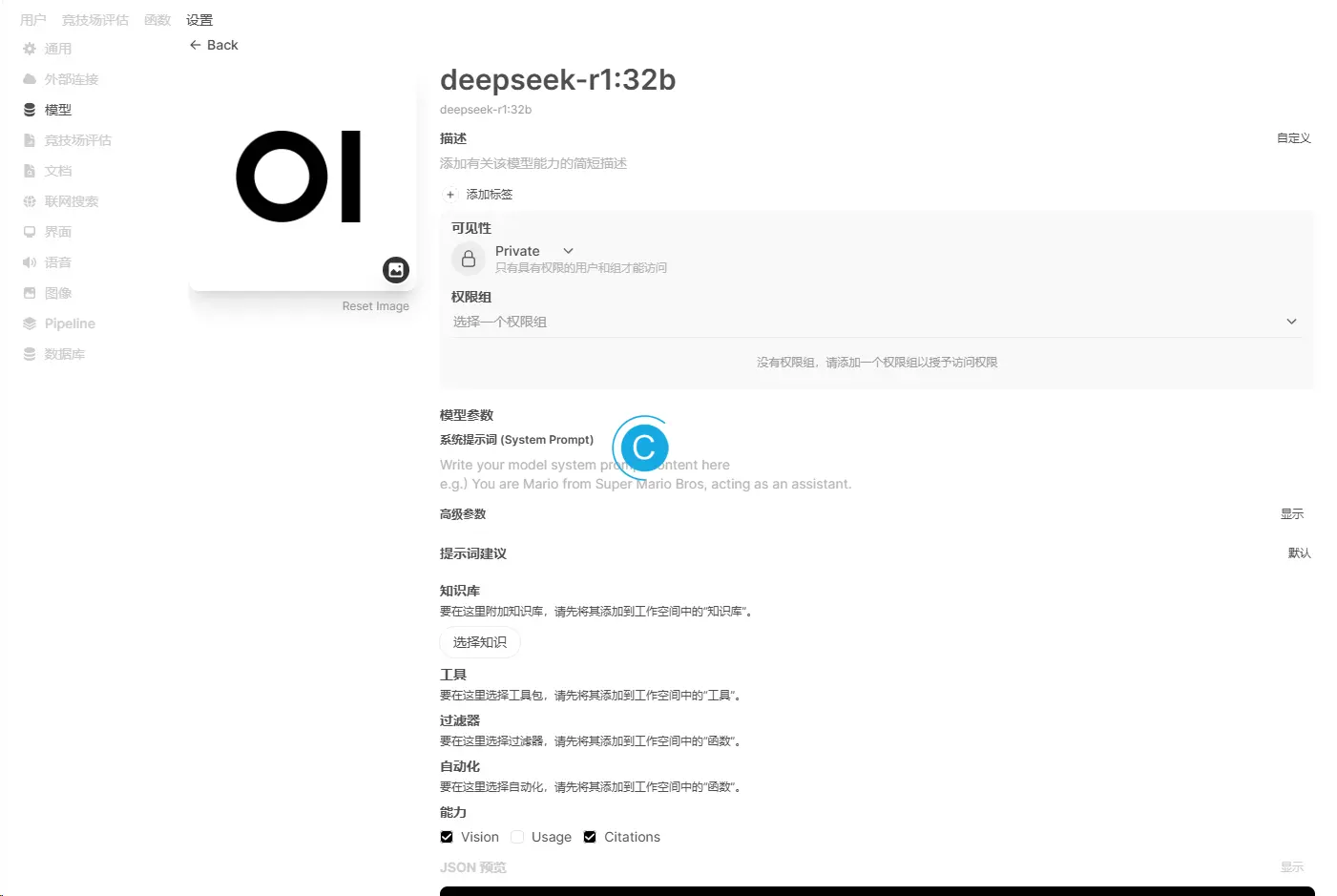

DeepSeek, a company based in China which aims to "unravel the mystery of AGI with curiosity," has released DeepSeek LLM, a 67 billion parameter model trained from scratch on a dataset of 2 trillion tokens. DeepSeek, the AI offshoot of Chinese quantitative hedge fund High-Flyer Capital Management, has officially launched its latest model, DeepSeek-V2.5, an enhanced version that integrates the capabilities of its predecessors, DeepSeek-V2-0628 and DeepSeek-Coder-V2-0724. Be careful with DeepSeek, Australia says - so is it safe to use? This compression allows for more efficient use of computing resources, making the model not only powerful but also highly economical in terms of resource consumption. R1 is built on V3, with distilled variants based on Alibaba's Qwen and Meta's Llama; what makes it interesting is that, unlike most other top models from tech giants, it's open source, meaning anyone can download and use it. Liang said in a July 2024 interview with Chinese tech outlet 36kr that, like OpenAI, his company wants to achieve artificial general intelligence and would keep its models open going forward. The world is still reeling over the release of DeepSeek-R1 and its implications for the AI and tech industries.

This ensures that users with high computational demands can still leverage the model's capabilities efficiently. The praise for DeepSeek-V2.5 follows a still ongoing controversy around HyperWrite’s Reflection 70B, which co-founder and CEO Matt Shumer claimed on September 5 was "the world’s top open-source AI model," according to his internal benchmarks, only to see those claims challenged by independent researchers and the wider AI research community, who have so far failed to reproduce the stated results. AI observer Shin Megami Boson, a staunch critic of HyperWrite CEO Matt Shumer (whom he accused of fraud over the irreproducible benchmarks Shumer shared for Reflection 70B), posted a message on X stating he’d run a private benchmark imitating the Graduate-Level Google-Proof Q&A Benchmark (GPQA). DeepSeek LLM 67B Base has showcased unparalleled capabilities, outperforming Llama 2 70B Base in key areas such as reasoning, coding, mathematics, and Chinese comprehension. Access to intermediate checkpoints from the base model’s training process is provided, with usage subject to the outlined licence terms. From 2020 to 2023, the main thing being scaled was pretrained models: models trained on increasing amounts of internet text with a tiny bit of other training on top.

Meanwhile, DeepSeek also makes its models available for inference: that requires a whole bunch of GPUs above and beyond whatever was used for training. KELA’s Red Team successfully jailbroke DeepSeek using a combination of outdated techniques, which had been patched in other models two years ago, as well as newer, more advanced jailbreak methods. DeepSeek’s lesson is that the best engineering optimizes for two things: performance and cost. This is cool. Against my private GPQA-like benchmark deepseek v2 is the actual best performing open source model I've tested (inclusive of the 405B variants). Notably, the model introduces function calling capabilities, enabling it to interact with external tools more effectively. We quickly noticed that this flavor of DeepSeek refusal supersedes the reasoning function of the model. I have mentioned function calling many times in my earlier article; we already know that a function call is a technique that enables an LLM to autonomously select and call predefined functions based on the conversation content. Do you know what a baby rattlesnake fears? Conventional wisdom holds that large language models like ChatGPT and DeepSeek need to be trained on more and more high-quality, human-created text to improve; DeepSeek took another approach.
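The function-calling idea described above can be illustrated with a minimal sketch. It assumes the model emits its chosen call as a JSON object with `name` and `arguments` fields; that shape, the `TOOLS` registry, the `tool` decorator and the two example functions are all hypothetical and are not taken from DeepSeek's API. No real model is contacted; the model output is simulated as a string.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical registry of predefined functions the model may choose from.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function under its own name so it can be called by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_weather(city: str) -> str:
    # Stand-in for a real lookup; returns canned text for illustration.
    return f"Sunny in {city}"


@tool
def add(a: float, b: float) -> float:
    return a + b


def dispatch(model_output: str) -> Any:
    """Parse a JSON tool call (assumed format) and run the matching function."""
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


# Simulated model output: the model "selected" the add function.
simulated = '{"name": "add", "arguments": {"a": 2, "b": 3}}'
print(dispatch(simulated))
```

In a real deployment the application would send the function descriptions to the model, receive a structured call like the simulated string, run it, and pass the result back to the model for the final answer. Rejecting unknown names keeps the model limited to the predefined functions.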

Instruction-following evaluation for large language models. Chinese models are making inroads to be on par with American models. In terms of language alignment, DeepSeek-V2.5 outperformed GPT-4o mini and ChatGPT-4o-latest in internal Chinese evaluations. In-depth evaluations have been conducted on the base and chat models, comparing them to existing benchmarks. The research community is granted access to the open-source versions, DeepSeek LLM 7B/67B Base and DeepSeek LLM 7B/67B Chat. Indeed, if DeepSeek had had access to even more AI chips, it could have trained a more powerful AI model, made certain discoveries earlier, and served a larger user base with its existing models, which in turn would increase its revenue. Noting the rise in self-hosted AI, the report indicated that among the most prevalent model types, BERT has become even more dominant, rising from 49% to 74% year-over-year. This model achieves state-of-the-art performance on multiple programming languages and benchmarks. DeepSeek does charge companies for access to its application programming interface (API), which allows apps to talk to each other and helps developers bake AI models into their apps. Its state-of-the-art performance across various benchmarks indicates strong capabilities in the most common programming languages. DeepSeek-R1, released in January 2025, focuses on reasoning tasks and challenges OpenAI's o1 model with its advanced capabilities.
